Use cases

Real-Time ETL and CDC Streaming

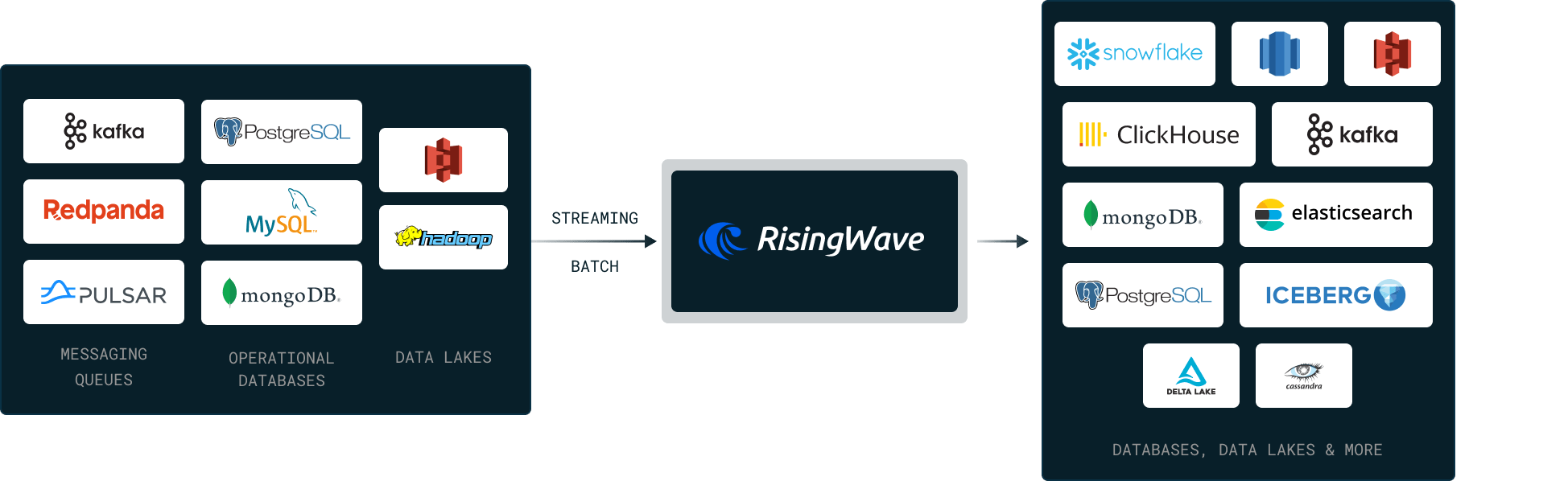

RisingWave replaces batch ETL with continuous streaming pipelines using SQL. Ingest CDC from PostgreSQL and MySQL, transform with multi-way joins, and deliver to downstream systems in real time.


What is real-time ETL?

Real-time ETL (extract, transform, load) continuously moves and transforms data between systems as it is generated, replacing batch ETL jobs that run on hourly or daily schedules. Data becomes available for analysis within seconds of being created, which enables responsive decision-making and event-driven architectures.

What are the challenges of real-time ETL?

Volume and velocity: Real-time ETL must handle millions of events per second from databases, message queues, and APIs without falling behind.

Exactly-once delivery: Data must not be duplicated or lost during processing, even when failures occur.

Complex transformations: Multi-way joins, aggregations, and enrichment across different data sources are computationally intensive at streaming speed.

Schema evolution: Source schemas change over time. The ETL pipeline must handle schema changes without manual intervention or downtime.

Operational complexity: Traditional solutions like Flink require Java expertise, custom operators, and dedicated DevOps teams to maintain streaming pipelines.

Why use RisingWave for real-time ETL?

RisingWave replaces complex Flink or Spark streaming pipelines with standard SQL. You define the entire ETL pipeline (extract, transform, and load) in PostgreSQL-compatible SQL.

Extract: Ingest data from 50+ sources including PostgreSQL (CDC), MySQL (CDC), MongoDB (CDC), Apache Kafka, Amazon Kinesis, Google Pub/Sub, and S3. RisingWave handles exactly-once CDC with TOAST column support and out-of-order event handling.

Transform: Use streaming SQL for multi-way joins, time-windowed aggregations, filtering, and enrichment. Define transformations as materialized views that update continuously as new data arrives.

Load: Deliver processed data to Apache Iceberg, Snowflake, ClickHouse, PostgreSQL, Kafka, Elasticsearch, Redis, and other downstream systems. Data arrives within seconds, not hours.

No Java required: Unlike Flink, RisingWave uses SQL as its primary interface. Your data engineers can build and maintain streaming ETL pipelines without learning Java or managing JVM clusters.

Exactly-once semantics: RisingWave guarantees exactly-once processing with consistent snapshot reads, ensuring data accuracy across the entire pipeline.

Failure recovery in seconds: State is persisted in S3-compatible object storage. Recovery takes seconds, not the minutes or hours required by Flink's checkpoint-based recovery.
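To make the Transform step above concrete, here is a minimal sketch of a time-windowed aggregation defined as a materialized view. It assumes the cart_event source from the sample code further down this page. The view name cart_events_per_minute and the one-minute window size are illustrative choices, not part of the original example.

```sql
-- Count cart events per item in one-minute tumbling windows.
-- Assumes the cart_event source (cust_id, event_time, item_id) already exists.
-- The view name and window size are hypothetical.
CREATE MATERIALIZED VIEW cart_events_per_minute AS
SELECT
    item_id,
    window_start,
    window_end,
    COUNT(*) AS event_count
FROM TUMBLE(cart_event, event_time, INTERVAL '1 MINUTE')
GROUP BY item_id, window_start, window_end;
```

Once created, the view can be queried like a regular table, and its results are kept up to date as new events arrive.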

Sample code

RisingWave allows users to continuously extract, transform, and load data from data sources to destinations.

Step 1

Create a source in RisingWave to receive event streams from your Kafka topic.

    -- Ingest event streams from Kafka
    CREATE SOURCE IF NOT EXISTS cart_event (
        cust_id VARCHAR,
        event_time TIMESTAMP,
        item_id VARCHAR
    )
    WITH (
        connector='kafka',
        topic='cart-events', ...
    ) FORMAT PLAIN ENCODE JSON;

Step 2

Create a table in RisingWave to capture the latest product catalog from PostgreSQL.

    -- Ingest CDC from PostgreSQL
    CREATE TABLE product_catalog (
        item_id varchar,
        name varchar,
        price double precision,
        PRIMARY KEY (item_id)
    )
    WITH (
        connector = 'postgres-cdc',
        hostname = '127.0.0.1', ...
    );
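CDC ingestion from PostgreSQL relies on logical replication, so the upstream database usually needs some preparation before the table above can receive changes. The statements below are a sketch of that preparation, run on the PostgreSQL server itself rather than in RisingWave. The role name cdc_user is a placeholder for whatever user the connector is configured with, and the exact privileges required may differ in your environment.

```sql
-- Run these on the upstream PostgreSQL server, not in RisingWave.

-- Check the current write-ahead log level; logical decoding needs 'logical'.
SHOW wal_level;

-- Enable logical decoding. The new value takes effect after a server restart.
ALTER SYSTEM SET wal_level = logical;

-- Allow the connector's user to open replication connections.
-- cdc_user is a hypothetical role name.
ALTER USER cdc_user REPLICATION;
```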

Step 3

Create a sink in RisingWave that enriches the cart events with product details and writes the enriched stream directly to another Kafka topic.

    -- Perform enrichment and directly sink to Kafka
    CREATE SINK data_enrichment AS SELECT
        c.cust_id,
        c.event_time,
        p.name,
        p.price
    FROM
        cart_event c
    JOIN
        product_catalog p
    ON
        c.item_id = p.item_id
    WITH (
        connector='kafka',
        topic='enriched-cart-events', ...
    )
    FORMAT PLAIN ENCODE JSON;
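If the enriched data should also be queryable inside RisingWave, the same join can be defined as a materialized view instead of (or in addition to) a sink. The following is a sketch under that assumption. The view name enriched_cart_event is illustrative and does not appear in the original example.

```sql
-- Keep the enriched stream as a continuously updated materialized view.
-- Assumes cart_event (Step 1) and product_catalog (Step 2) already exist.
-- The view name is hypothetical.
CREATE MATERIALIZED VIEW enriched_cart_event AS
SELECT
    c.cust_id,
    c.event_time,
    p.name,
    p.price
FROM cart_event c
JOIN product_catalog p
    ON c.item_id = p.item_id;

-- Inspect the most recent enriched events with an ad hoc query.
SELECT *
FROM enriched_cart_event
ORDER BY event_time DESC
LIMIT 10;
```

A view like this can serve lookups directly over the PostgreSQL-compatible interface, while the sink in Step 3 continues to deliver the same result to Kafka.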
